Building integrations that move money, documents, and data for a regulated NBFC.

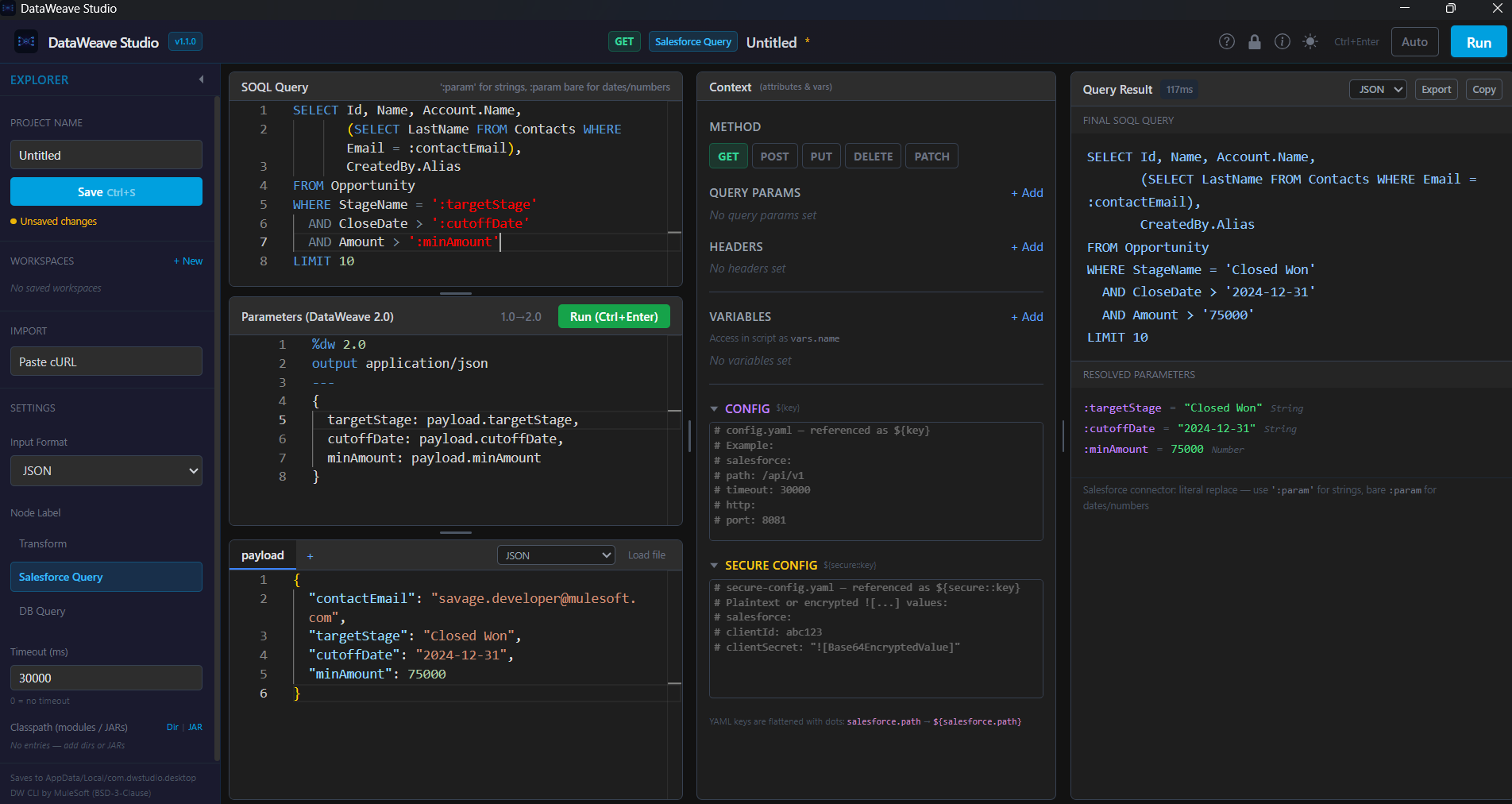

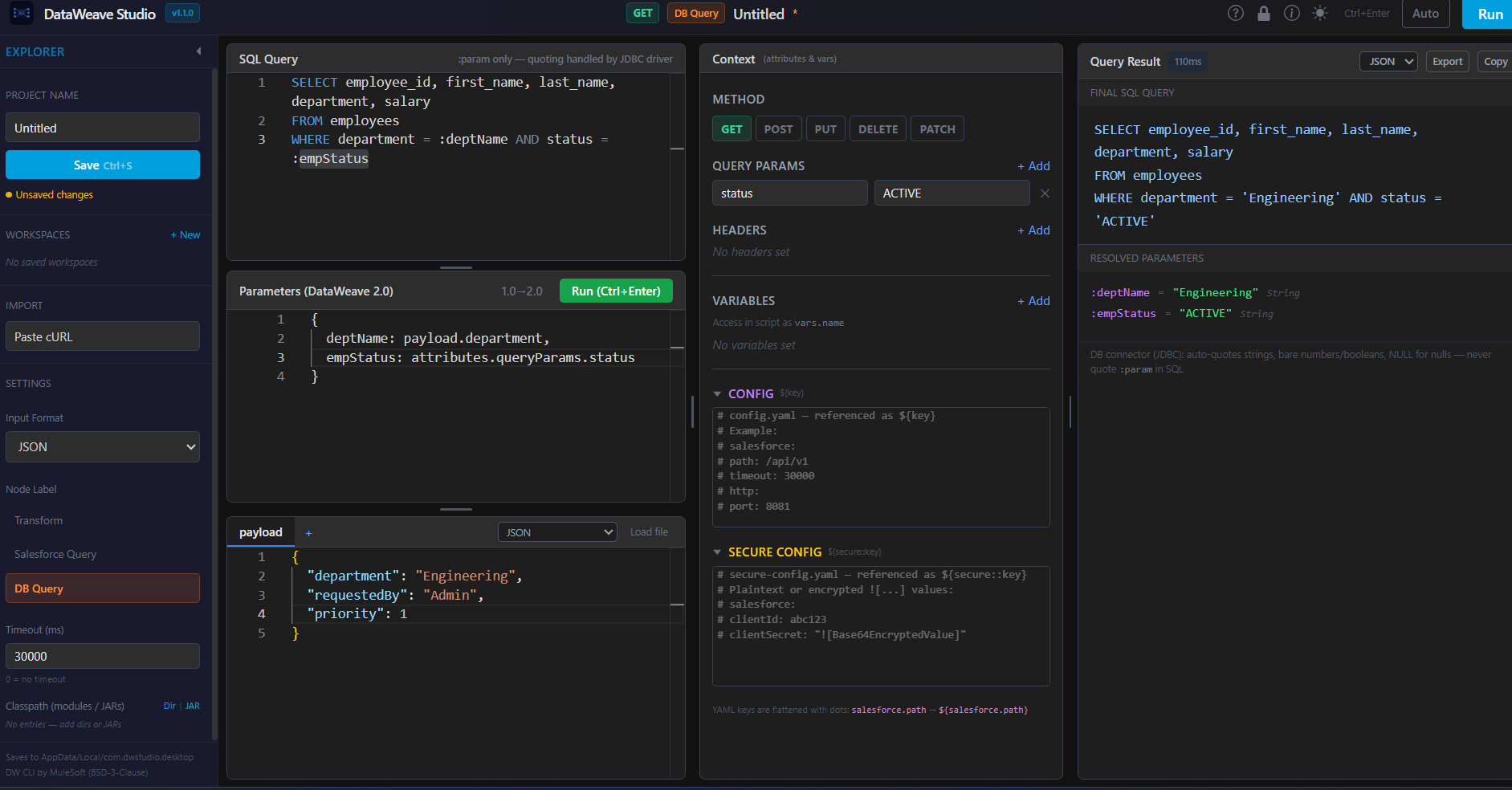

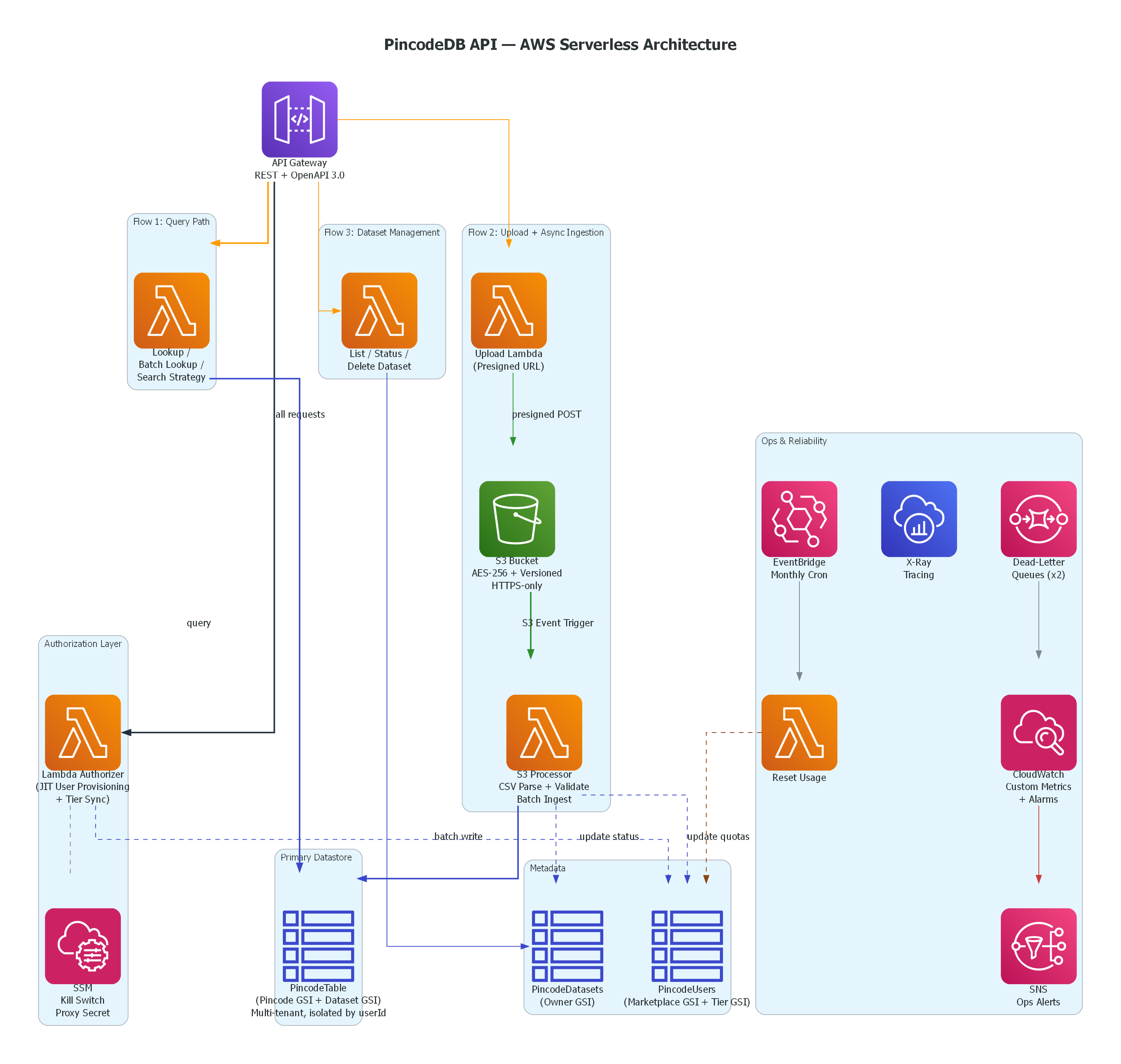

I'm an Integration Engineer at a housing finance NBFC regulated by the RBI, and the primary MuleSoft developer on the team. I own 40+ APIs and 720+ endpoints (system, process, and experience APIs) across a four-server on-prem production environment, serving 1 lakh+ (100,000+) daily transactions.

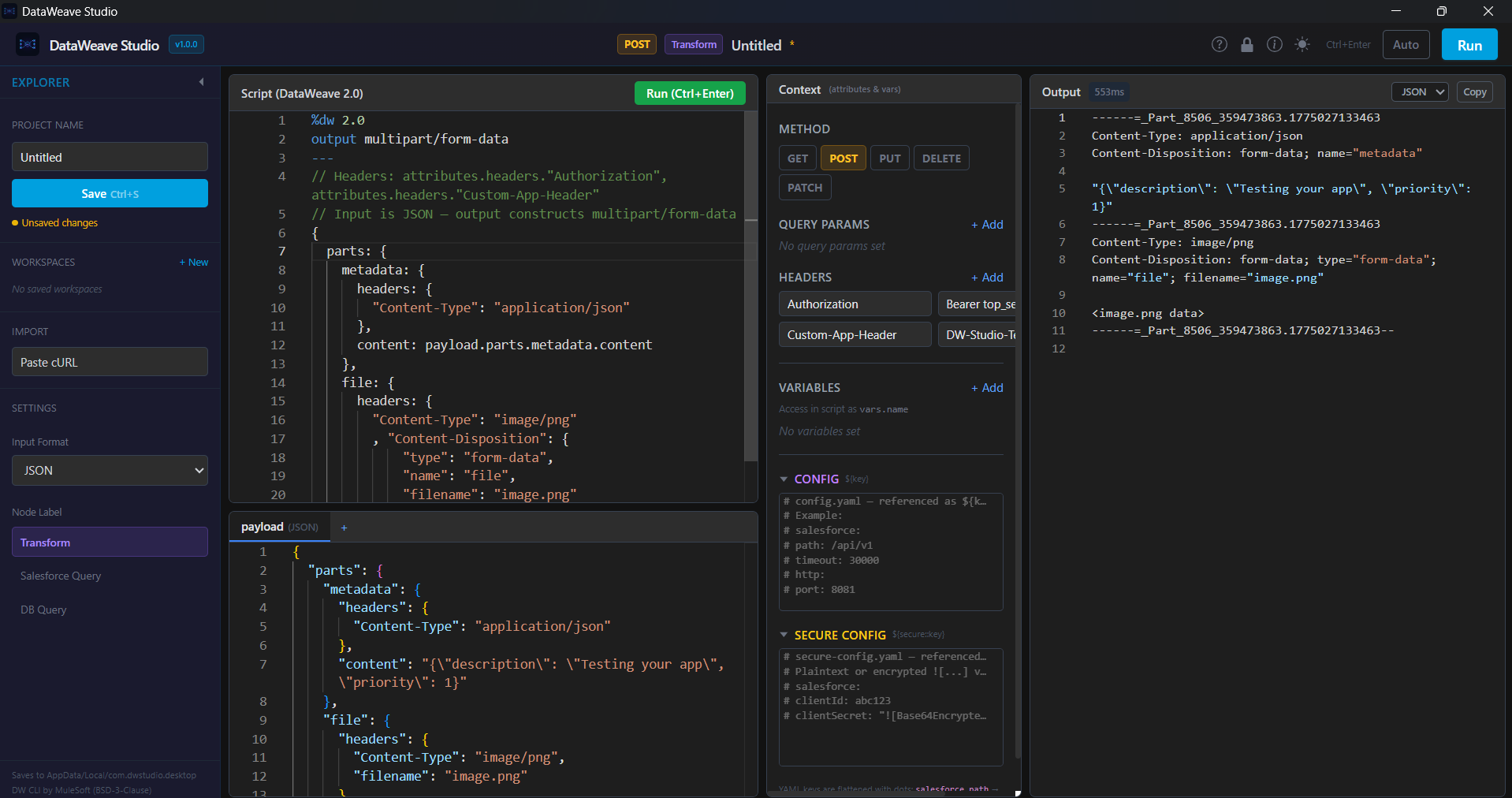

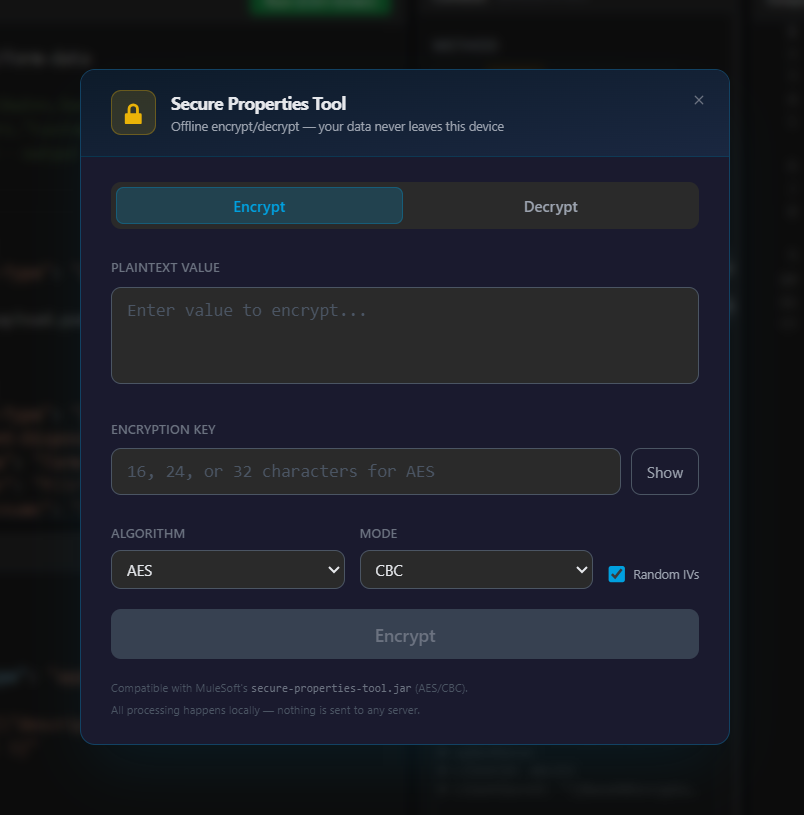

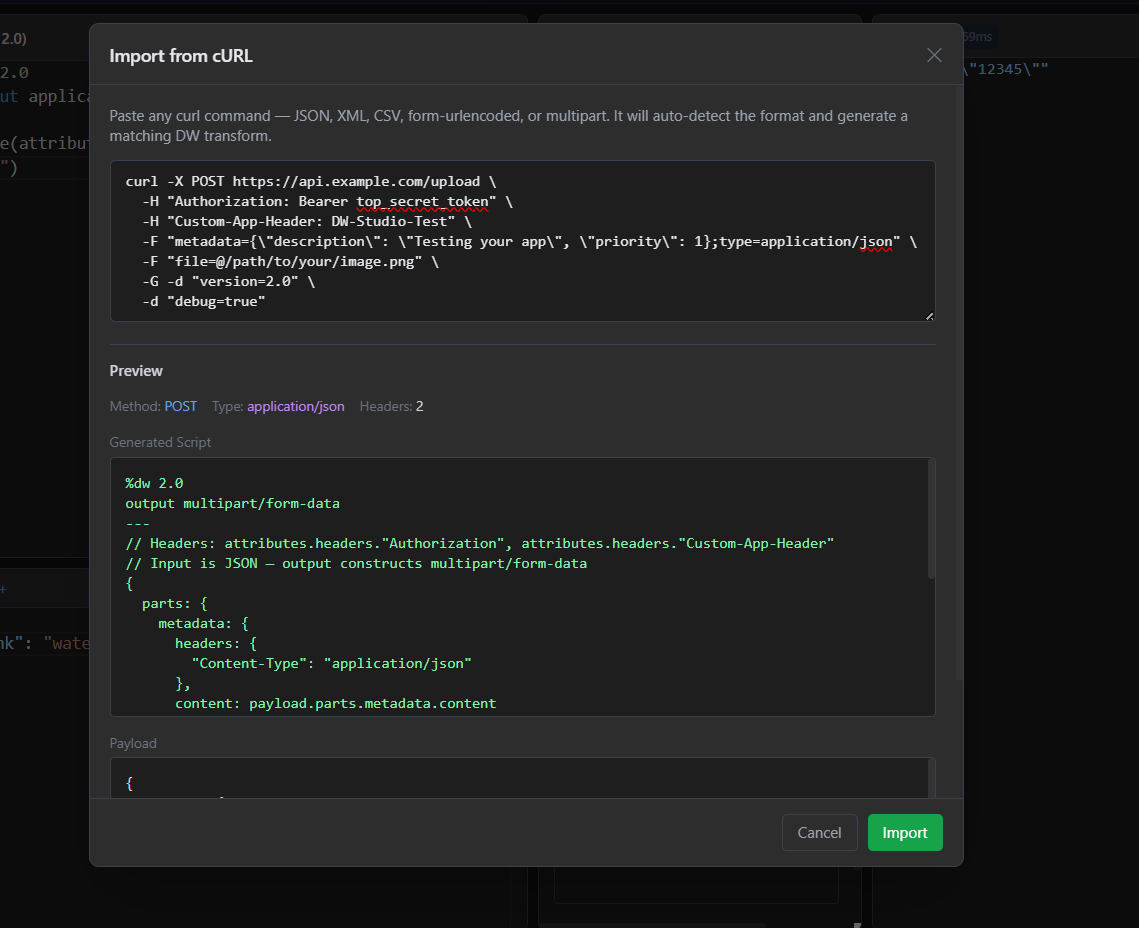

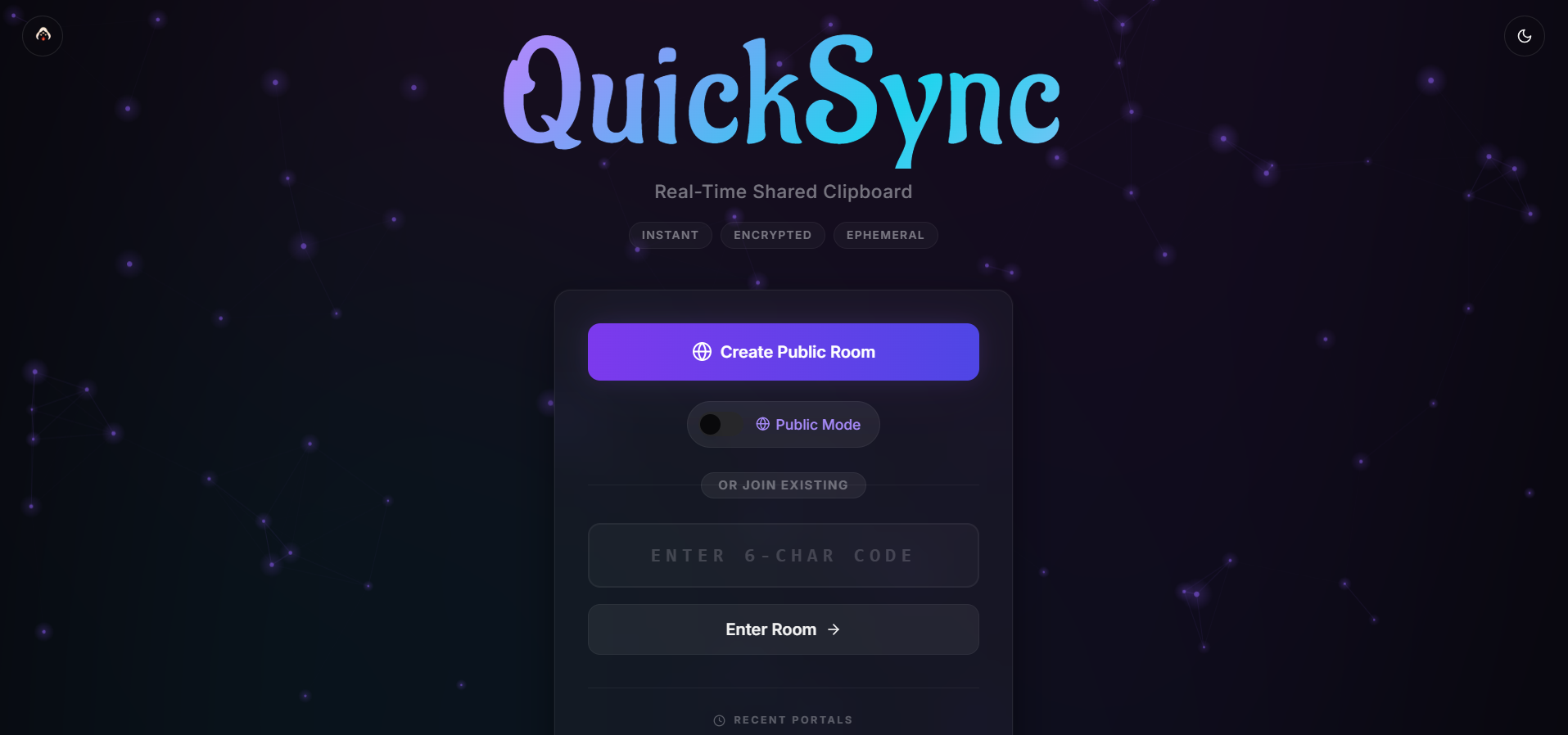

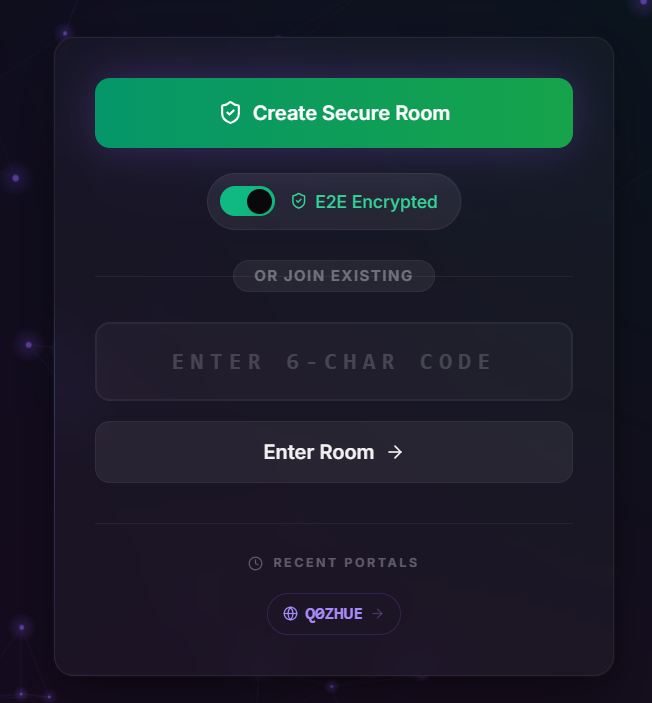

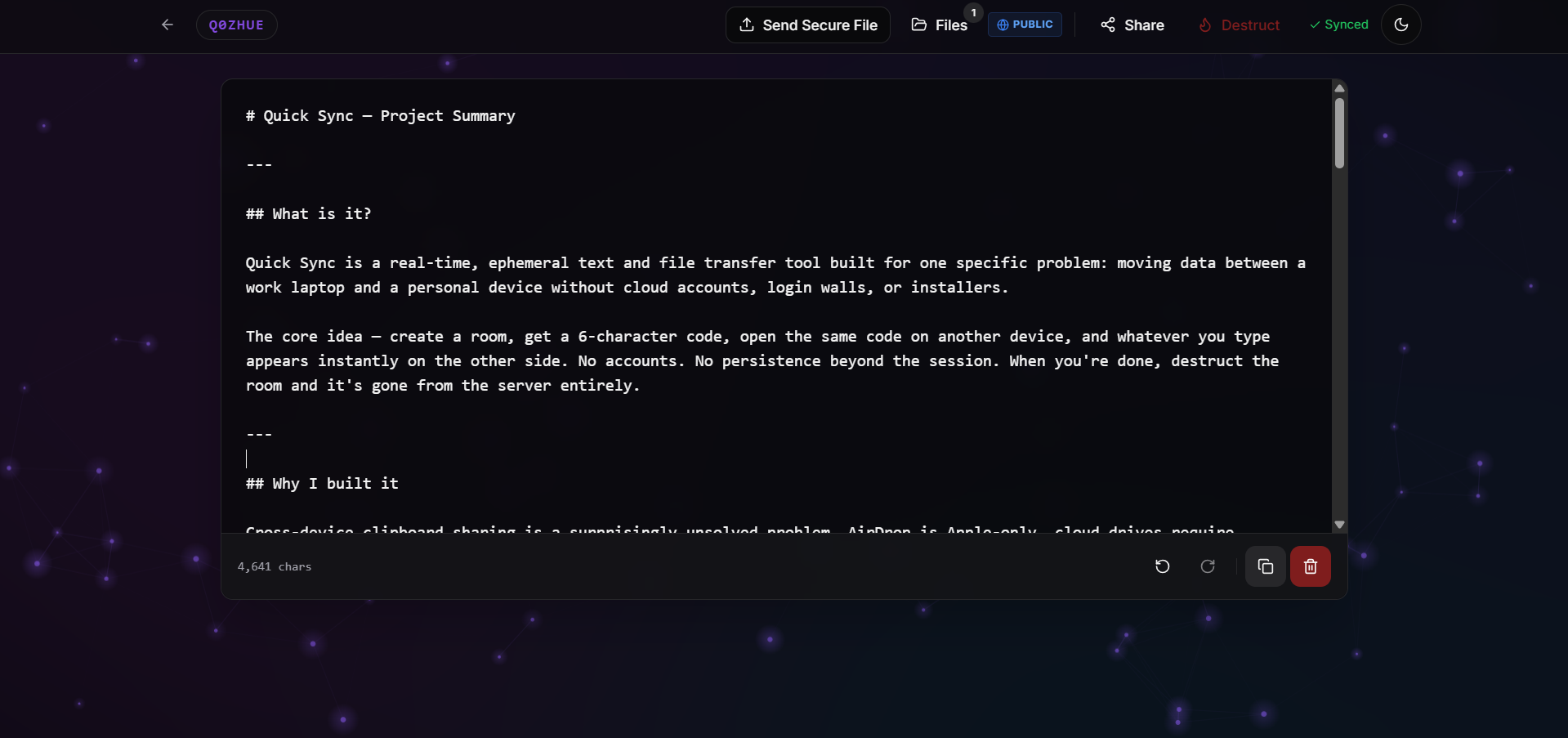

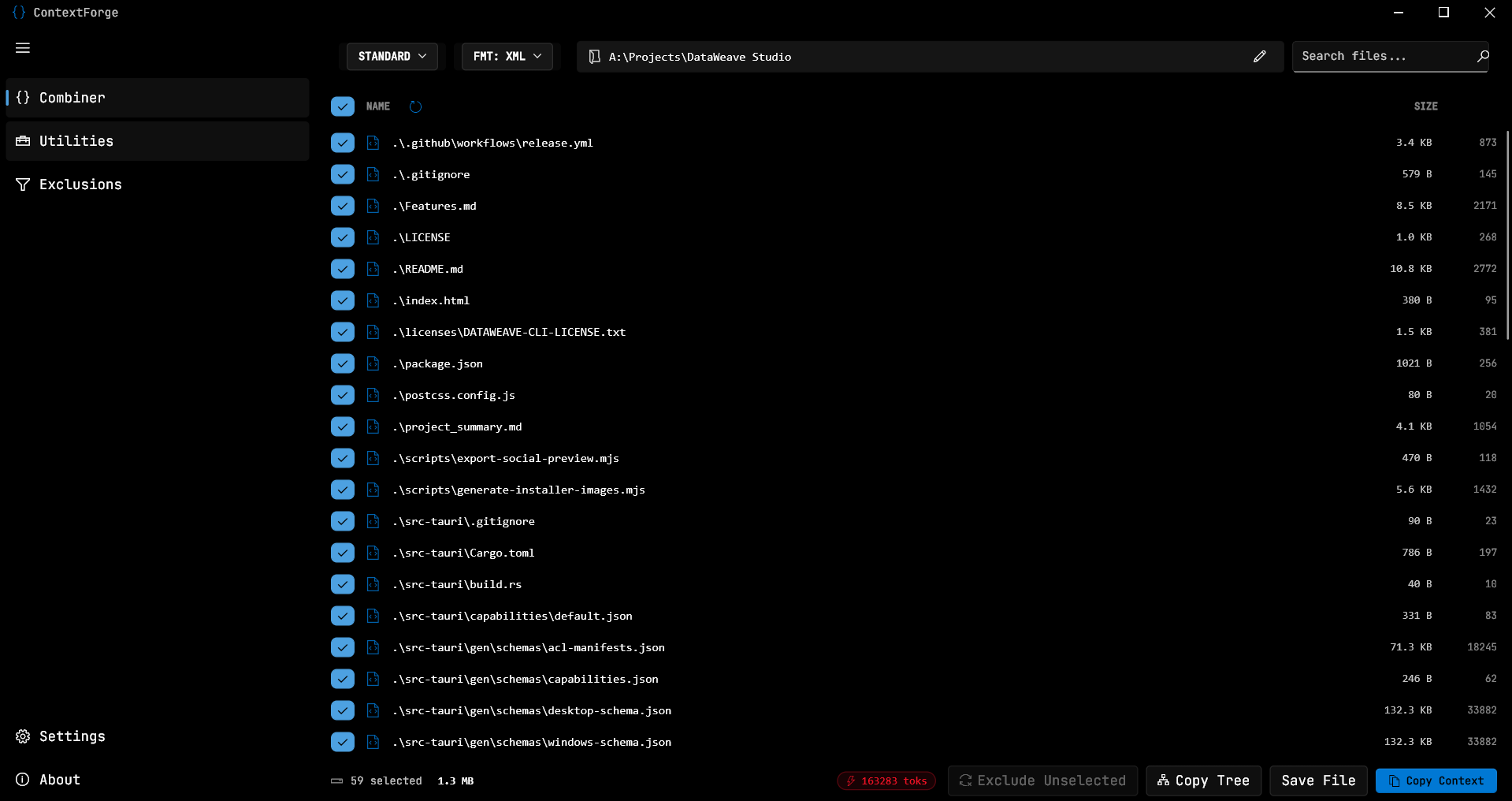

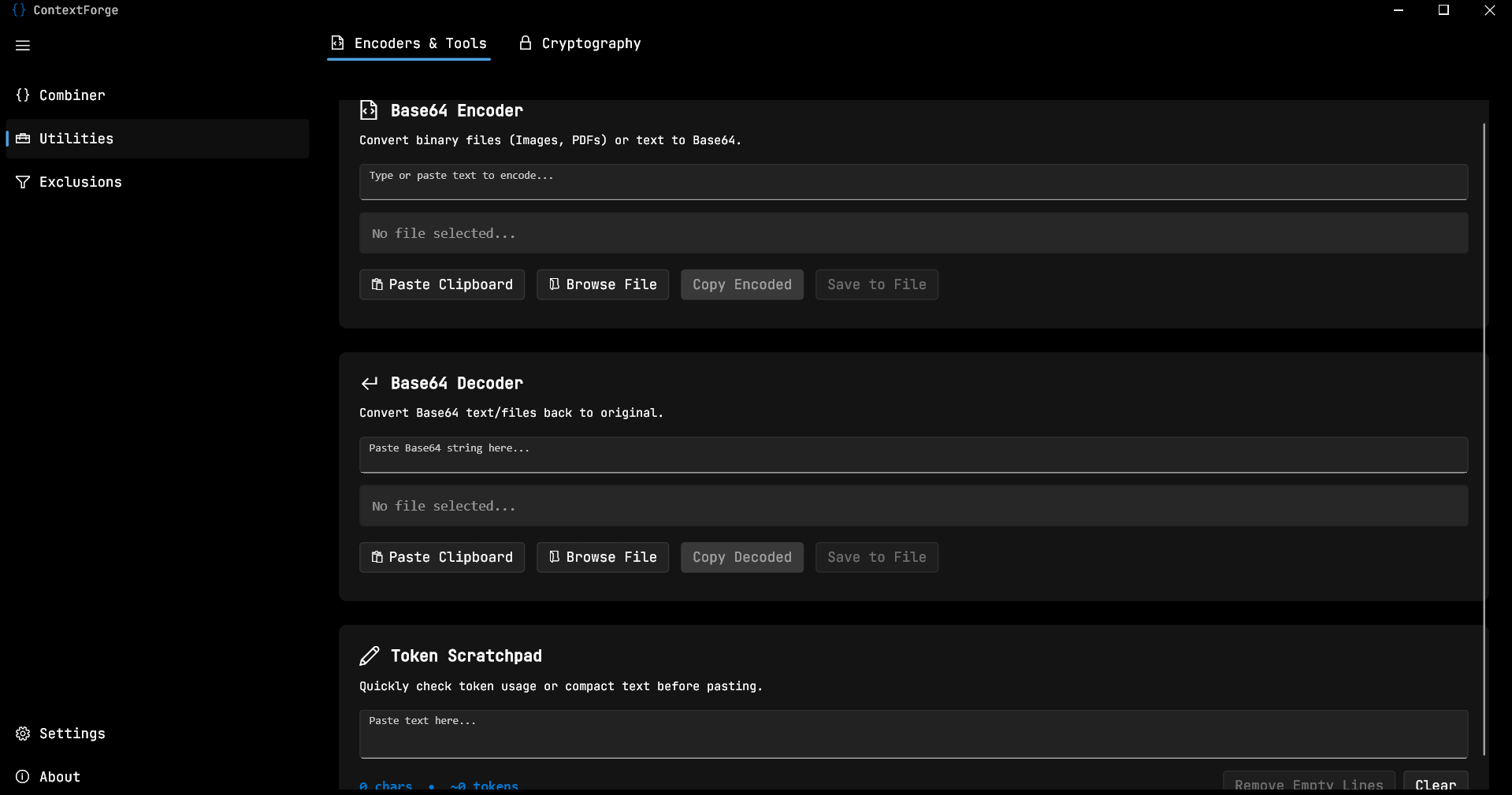

My day job is designing integration flows, writing DataWeave, managing Salesforce connectivity, handling VAPT remediation, and keeping production stable while shipping. Outside of work, I build developer tools and open-source projects that solve problems I actually run into.